Why Writing Too Well Can Get You in Trouble

Written By Ritika Sharma

Thumbnail and Banner Photo by Mahnoor Faisal on Make use of AI

The rise of artificial intelligence has birthed a paradoxical challenge for modern writers. As academic and professional institutions lean on automated detection tools to verify authenticity, these systems are increasingly flagging human excellence as machine-generated. Ironically, the same qualities that define great writing, such as clarity, structure, and precise vocabulary, are the exact patterns that trigger digital alarms. When historical pillars such as the Bible or the Declaration of Independence fail an authenticity test, it reveals a fundamental flaw in how we judge originality. This shift creates a high-stakes environment where students and professionals alike find themselves under suspicion not for a lack of skill, but for writing too well.

The Bible Is the AI Era's Greatest False Positive

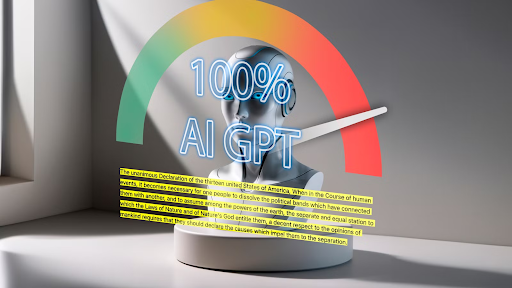

The Hebrew Bible was completed around 100 CE, meaning it predates modern AI by roughly 2,000 years. Despite this minor timeline issue, AI detectors like ZeroGPT have flagged passages from the Bible as almost 100% AI-generated. According to the software, ancient scribes were either time travelers or extremely efficient prompt engineers.

Photo by Mahnoor Faisal on Make use of AI

The real reason is less exciting. The Bible is structured and repetitive, and because it is so influential, its style runs through the text that AI models learn from. When a detector confidently labels one of the oldest and most studied human texts in history as machine-written, it becomes clear that the tool is not detecting AI so much as panicking when it sees good writing.

When the Algorithm Accuses You

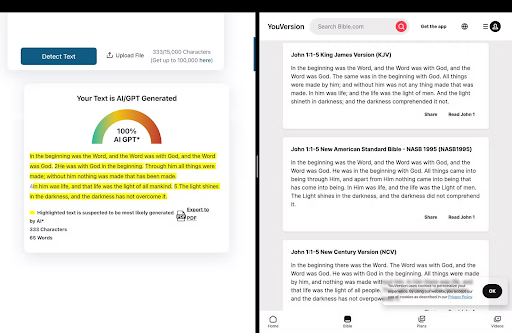

Picture this. You submit an essay that you worked very hard on. You researched carefully and wrote every sentence yourself, following the rubric. Your professor runs it through an AI detection tool.

The result comes back as 99 percent AI-generated.

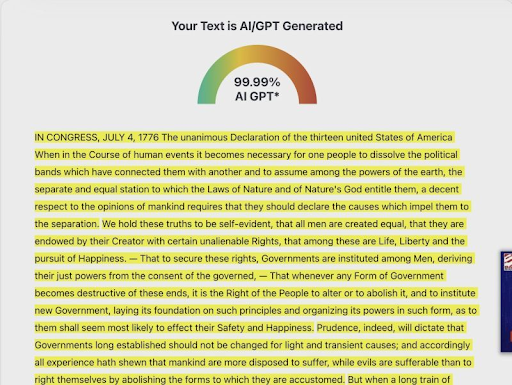

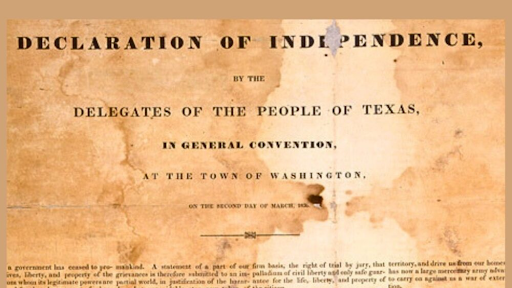

At that moment, it does not matter how confident you are in your own work. A machine has spoken, and its verdict appears final. What makes this scenario even more concerning is that the same tools have flagged historical documents such as the Declaration of Independence as almost entirely AI-generated. If a text written in 1776 can fail an authenticity check, it may be time to reconsider how reliable these systems really are.

AI detection tools are now widely used in academic and professional settings, often treated as neutral arbiters of truth. In reality, they are far less dependable than that authority suggests.

Why AI Detection Tools Struggle With Human Writing

AI detection software does not understand ideas, originality, or effort. Instead, it looks for patterns in sentences, structure, vocabulary, and predictability. Writing that is clear, formal, and well-organized often triggers these systems because it resembles the polished text that AI models are trained to produce.

This creates a strange contradiction. The better a student writes, the more likely their work may be flagged as suspicious. Academic writing, by design, values clarity and consistency. Detection tools often mistake those qualities for artificial generation.

The result is a system that penalizes strong writing while claiming to protect academic integrity.
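To make the idea of pattern-based scoring concrete, here is a minimal Python sketch. It is an illustration only, not how ZeroGPT or any real detector works: the function name `surface_signals`, the two statistics it computes, and both sample texts are assumptions invented for this example. It measures how much sentence lengths vary and how often words repeat, two surface features that say nothing about who wrote a text.

```python
import re
import statistics


def surface_signals(text):
    """Toy surface statistics for a passage (hypothetical, for illustration).

    Real detectors are more complex and do not publish their methods;
    this only shows what "looking for patterns" can mean.
    """
    # Split on sentence-ending punctuation and drop empty pieces.
    sentences = [s for s in re.split(r"[.!?]+\s*", text) if s.strip()]
    if not sentences:
        raise ValueError("text contains no sentences")

    # Number of words in each sentence.
    lengths = [len(s.split()) for s in sentences]

    # Lowercased words, used to measure repetition.
    words = re.findall(r"[a-z']+", text.lower())

    return {
        # 0.0 means every sentence has the same length.
        "sentence_length_spread": statistics.pstdev(lengths),
        # Share of words that are distinct; lower means more repetition.
        "vocabulary_ratio": len(set(words)) / len(words),
    }


# Invented sample: carefully parallel, repetitive, human-written prose.
uniform = "The cat sat on the mat. The dog sat on the rug. The bird sat on the branch."

# Invented sample: uneven, conversational prose.
varied = ("Stop. I had not expected the results to come back so quickly, "
          "and certainly not with that score. Why?")

print(surface_signals(uniform))
print(surface_signals(varied))
```

The first sample has a sentence-length spread of 0.0 and a vocabulary ratio of 0.5, simply because it is tidy and repetitive. A tool that leaned on numbers like these would find deliberate, well-structured human prose more "regular" than a rambling draft, which is the contradiction described above.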

When Machines Are Trusted More Than People

One of the most troubling aspects of AI detection is how readily its results are accepted. A numerical score feels objective, even when the tool offers no meaningful explanation of how that score was calculated. Students are then placed in the uncomfortable position of arguing against an algorithm rather than engaging in a conversation about their work.

When a professor believes a machine over a student without further investigation, the issue stops being about integrity and becomes about misplaced trust.

Technology should serve as a diagnostic aid for human judgment, rather than a replacement for it.

The Privacy Problem Few Students Know About

Beyond accuracy, another issue deserves attention. Many detection tools upload scanned assignments to external servers. In some cases, this data can be stored or reused to train future models. This creates a self-perpetuating cycle where student writing becomes fuel for the same technology that later evaluates it.

For students, this is a serious question about consent, ownership, and privacy. Essays are not just assignments. They are intellectual work. The idea that they can quietly become part of an AI training data set is something students should be aware of, not discover after the fact.

Why This Matters Even If You Never Use These Tools

Most students will never open an AI detection website, but that does not mean these tools have no impact on them. Detection software influences grading decisions, academic misconduct investigations, and how student work is perceived.

A false positive can cause stress, damage trust, and force students to defend work they genuinely did. That alone makes AI detection a student issue, not just a faculty concern.

What Students Can Do

This article is not arguing that AI detection tools should be banned; it argues that they should be treated with caution. Students would benefit from learning how to critically evaluate writing, understand these tools' limitations, and advocate for transparency when they are used.

Human judgment, discussion, and context matter. An algorithm can support those processes, but it cannot replace them.

If a tool can confidently accuse one of the most famous human-written documents in history of being AI-generated, it should not be the final authority on student work.